After the whole Plait fiasco with the sum of the infinite series of natural numbers, I decided it would be interesting to dig into the real math behind that mess. That means digging into the Riemann zeta function, and the concept of analytic continuation.

A couple of caveats before I start:

- This is the area of math where I’m at my worst. I am not good at analysis. I’m struggling to understand this stuff well enough to explain it. If I screw up, please let me know in the comments, and I’ll do my best to update the main post promptly.

- This is way more complicated than most of the stuff I write on this blog. Please be patient, and try not to get bogged down. I’m doing my best to take something that requires a whole lot of specialized knowledge, and explain it as simply as I can.

What I’m trying to do here is to get rid of some of the mystery surrounding this kind of thing. When people think about math, they frequently get scared. They say things like “Math is hard, I can’t hope to understand it.”, or “Math produces weird results that make no sense, and there’s no point in my trying to figure out what it means, because if I do, my brain will explode. Only a super-genius geek can hope to understand it!”

That’s all rubbish.

Math is complicated, because it covers a whole lot of subjects. To understand the details of a particular branch of math takes a lot of work, because it takes a lot of special domain knowledge. But it’s not fundamentally different from many other things.

I’m a professional software engineer. I did my PhD in computer science, specializing in programming languages and compiler design. Designing and building a compiler is hard. To be able to do it well and understand everything that it does takes years of study and work. But anyone should be able to understand the basic concepts of what it does, and what the problems are.

I’ve got friends who are obsessed with baseball. They talk about ERAs, DIERAs, DRSs, EQAs, PECOTAs, Pythagorean expectations, secondary averages, UZRs… To me, it’s a huge pile of gobbledygook. It’s complicated, and to understand what any of it means takes some kind of specialized knowledge. For example, I looked up one of the terms I saw in an article by a baseball fan: “Peripheral ERA is the expected earned run average taking into account park-adjusted hits, walks, strikeouts, and home runs allowed. Unlike Voros McCracken’s DIPS, hits allowed are included.” I have no idea what that means. But it seems like everyone who loves baseball – including people who think that they can’t do their own income tax return because they don’t understand how to compute percentages – understands that stuff. They care about it, and since it means something in a field that they care about, they learn it. It’s not beyond their ability to understand – it just takes some background to be able to make sense of it. Without that background, someone like me feels lost and clueless.

That’s the way that math is. When you go to look at a result from complex analysis without knowing what complex analysis is, it looks like terrifyingly complicated nonsensical garbage, like “A meromorphic function is a function on an open subset of the complex number plane which is holomorphic on its domain except at a set of isolated points where it must have a Laurent series”.

And it’s definitely not easy. But understanding, in a very rough sense, what’s going on and what it means is not impossible, even if you’re not a mathematician.

Anyway, what the heck is the Riemann zeta function?

It’s not easy to give even the simplest answer to that in a meaningful way.

Basically, Riemann Zeta is a function which describes fundamental properties of the prime numbers, and therefore of our entire number system. You can use the Riemann Zeta to prove that there’s no largest prime number; you can use it to talk about the expected frequency of prime numbers. It occurs in various forms all over the place, because it’s fundamentally tied to the structure of the realm of numbers.

The starting point for defining it is a power series defined over the complex numbers (note that the parameter we use is $s$ instead of a more conventional $x$: this is a way of highlighting the fact that this is a function over the complex numbers, not over the reals).

$$\zeta(s) = \sum_{n=1}^{\infty} n^{-s}$$

This function $\zeta$, as defined by that series, is not the Riemann zeta function!

The Riemann zeta function is something called the analytic continuation of $\zeta$. We’ll get to that in a moment. Before doing that: why the heck should we care? I said it talks about the structure of numbers and primes, but how?

The zeta function actually has a lot of meaning. It tells us something fundamental about properties of the system of real numbers – in particular, about the properties of prime numbers. Euler proved that Zeta is deeply connected to the prime numbers, using something called Euler’s identity. Euler’s identity says that for all integer values of $s$ greater than 1:

$$\sum_{n=1}^{\infty} n^{-s} = \prod_{p \text{ prime}} \frac{1}{1 - p^{-s}}$$

Which is a way of saying that the Riemann zeta function can describe the probability distribution of the prime numbers.
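To make that identity a little less abstract, here is a rough numerical sketch of my own (not part of the original argument). It assumes $s = 2$, where the series converges, and compares a truncated sum over the natural numbers with a truncated product over the primes. The function names and the cutoffs are arbitrary choices for illustration.

```python
import math

def zeta_series(s, terms=100_000):
    """Truncated sum of n^(-s) for n = 1..terms; only meaningful when s > 1."""
    return sum(n ** -s for n in range(1, terms + 1))

def primes_up_to(limit):
    """Sieve of Eratosthenes: all primes <= limit."""
    sieve = [True] * (limit + 1)
    sieve[0:2] = [False, False]
    for i in range(2, math.isqrt(limit) + 1):
        if sieve[i]:
            for j in range(i * i, limit + 1, i):
                sieve[j] = False
    return [i for i, is_prime in enumerate(sieve) if is_prime]

def euler_product(s, limit=100_000):
    """Truncated product of 1 / (1 - p^(-s)) over the primes p <= limit."""
    product = 1.0
    for p in primes_up_to(limit):
        product *= 1.0 / (1.0 - p ** -s)
    return product

series_value = zeta_series(2)
product_value = euler_product(2)
# Both truncations should land close to pi^2 / 6, the known value at s = 2.
print(series_value, product_value, math.pi ** 2 / 6)
```

The two numbers are computed in completely different ways, one from all the natural numbers and one from only the primes, and they agree to several decimal places.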

To really understand the Riemann Zeta, you need to know how to do analytic continuation. And to understand that, you need to learn a lot of number theory and a lot of math from the specialized field called complex analysis. But we can describe the basic concept without getting that far into the specialized stuff.

What is an analytic continuation? This is where things get really sticky. Basically, there are places where there’s one way of solving a problem which produces a diverging infinite series. When that happens you say there’s no solution, that the point where you’re trying to solve it isn’t in the domain of the problem. But if you solve it in a different way, you can find a way of getting a solution that works. You’re using an analytic process to extend the domain of the problem, and get a solution at a point where the traditional way of solving it wouldn’t work.

A nice way to explain what I mean by that requires taking a diversion, and looking at a metaphor. What we’re talking about here isn’t analytic continuation; it’s a different way of extending the domain of a function, this time in the realm of the real numbers. But as an example, it illustrates the concept of finding a way to get the value of a function in a place where it doesn’t seem to be defined.

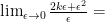

In math, we like to play with limits. One example of that is in differential calculus. What we do in differential calculus is look at continuous curves, and ask: at one specific location on the curve, what’s the slope?

If you’ve got a line, the slope is easy to determine. Take any two points on the line: $(x_1, y_1)$ and $(x_2, y_2)$, where $x_1 \neq x_2$. Then the slope is $\frac{y_2 - y_1}{x_2 - x_1}$. It’s easy, because for a line, the slope never changes.

If you’re looking at a curve more complex than a line, then slopes get harder, because they’re constantly changing. If you’re looking at $y = x^2$, and you zoom in and look at it very close to $x = 0$, it looks like the slope is very close to 0. If you look at it close to 1, it looks like it’s around 2. If you look at it at $x = 10$, it looks a bit more than 20. But there are no two points where it’s exactly the same!

So how can you talk about the slope at a particular point $x = k$? By using a limit. You pick a point really close to $k$, and call it $k + \epsilon$. Then an approximate value of the slope at $k$ is:

$$\frac{(k + \epsilon)^2 - k^2}{(k + \epsilon) - k}$$

The smaller $\epsilon$ gets, the closer your approximation gets. But you can’t actually get to $\epsilon = 0$, because if you did, that slope equation would have 0 in the denominator, and it wouldn’t be defined! But it is defined for all non-zero values of $\epsilon$. No matter how small, no matter how close to zero, the slope is defined. But at zero, it’s no good: it’s undefined.

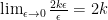

So we take a limit. As $\epsilon$ gets smaller and smaller, the slope gets closer and closer to some value. So we say that the slope at the point – at the exact place where the denominator of that fraction becomes zero – is defined as:

$$\lim_{\epsilon \to 0} \frac{(k + \epsilon)^2 - k^2}{(k + \epsilon) - k} = \lim_{\epsilon \to 0} \frac{k^2 + 2k\epsilon + \epsilon^2 - k^2}{\epsilon} = \lim_{\epsilon \to 0} \frac{2k\epsilon + \epsilon^2}{\epsilon}$$

(Note: the original version of the previous line had a missing “-“. Thanks to commenter Thinkeye for catching it.)

Since $\epsilon$ is getting closer and closer to zero, $\epsilon^2$ is getting smaller much faster; so we can treat it as zero:

$$\lim_{\epsilon \to 0} \frac{2k\epsilon + \epsilon^2}{\epsilon} = \lim_{\epsilon \to 0} \frac{2k\epsilon}{\epsilon} = 2k$$

So at any point $x = k$, the slope of $y = x^2$ is $2k$. Even though computing that involves dividing by zero, we’ve used an analytical method to come up with a meaningful and useful value at $\epsilon = 0$. This doesn’t mean that you can divide by zero. You cannot conclude that $\frac{0}{0} = 2k$. But for this particular analytical setting, you can come up with a meaningful solution to a problem that involves, in some sense, dividing by zero.
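Here is a small sketch of that limit in action, assuming $y = x^2$ and the point $k = 3$ (my choice of example, and `slope_estimate` is just an illustrative name). It evaluates the approximate slope for smaller and smaller $\epsilon$, and then shows that plugging in $\epsilon = 0$ directly really does fail.

```python
def slope_estimate(k, epsilon):
    """Approximate slope of y = x^2 at x = k, using the nearby point k + epsilon."""
    return ((k + epsilon) ** 2 - k ** 2) / ((k + epsilon) - k)

k = 3.0
for epsilon in (1.0, 0.1, 0.001, 1e-6):
    # The estimates creep toward 2k = 6 as epsilon shrinks.
    print(epsilon, slope_estimate(k, epsilon))

try:
    slope_estimate(k, 0.0)
except ZeroDivisionError:
    # At epsilon = 0 the formula itself is undefined; only the limit has a value.
    print("undefined at epsilon = 0")
```

The estimates approach 6, which is $2k$, even though the formula can never be evaluated at the one point we actually care about.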

The limit trick in differential calculus is not analytic continuation. But it’s got a tiny bit of the flavor.

Moving on: the idea of analytic continuation comes from the field of complex analysis. Complex analysis studies a particular class of functions in the complex number plane. It’s not one of the easier branches of mathematics, but it’s extremely useful. Complex analytic functions show up all over the place in physics and engineering.

In complex analysis, people focus on a particular group of functions that are called analytic, holomorphic, and meromorphic. (Those three are closely related, but not synonymous.)

A holomorphic function is a function over complex variables, which has one important property. The property is almost like a kind of abstract smoothness. In the simplest case, suppose that we have a complex equation in a single variable, and the domain of this function is $D$. Then it’s holomorphic if, and only if, for every point $d \in D$, the function is complex differentiable in some neighborhood of points around $d$.

(Differentiable means, roughly, that using a trick like the one we did above, we can take the slope (the derivative) around $d$. In the complex number system, “differentiable” is a much stronger condition than it would be in the reals. In the complex realm, if something is differentiable, then it is infinitely differentiable. In other words, given a complex equation, if it’s differentiable, that means that I can create a curve describing its slope. That curve, in turn, will also be differentiable, meaning that you can derive an equation for its slope. And that curve will be differentiable. Over and over, forever: the derivative of a differentiable curve in the complex number plane will always be differentiable.)

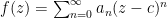

If you have a differentiable curve in the complex number plane, it’s got one really interesting property: it’s representable as a power series. (This property is what it means for a function to be called analytic; all holomorphic functions are analytic.) That is, a function $f$ is holomorphic for a set $S$ if, for all points $s \in S$, you can represent the value of the function as a power series for a disk of values around $s$:

$$f(z) = \sum_{n=0}^{\infty} a_n (z - c)^n$$

In the simplest case, the constant $c$ is 0, and it’s just:

$$f(z) = \sum_{n=0}^{\infty} a_n z^n$$

(Note: In the original version of this post, I miswrote the basic pattern of a power series, and put an extra term in the base. Thanks to John Armstrong for catching it.)
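As a concrete instance of a power series of the form $\sum a_n (z - c)^n$, here is a sketch that uses the exponential function, with $c = 0$ and $a_n = 1/n!$. The exponential is my own choice of example, not something the discussion above depends on, and the names are illustrative. The code sums the first 30 terms at a complex point and compares the result with Python's built-in complex exponential.

```python
import cmath
import math

def power_series(coefficients, z, c=0):
    """Evaluate sum of a_n * (z - c)^n over the given (finite) coefficient list."""
    return sum(a_n * (z - c) ** n for n, a_n in enumerate(coefficients))

# Coefficients a_n = 1/n! of the power series for exp(z) around c = 0.
exp_coefficients = [1 / math.factorial(n) for n in range(30)]

z = 0.5 + 1.25j
approx = power_series(exp_coefficients, z)
# The truncated series and the library function should agree very closely.
print(approx, cmath.exp(z))
```

The point is only that a smooth complex function and its power series are, inside the region where the series converges, two descriptions of the same thing.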

The function that we wrote, above, for the base of the zeta function is exactly this kind of power series. Zeta is an analytic function for a particular set of values. Not all values in the complex number plane; just for a specific subset.

If a function $f$ is holomorphic, then the strong differentiability of it leads to another property. There’s a unique extension to it that expands its domain. The expansion always produces the same value for all points that are within the domain of $f$. It also produces exactly the same differentiability properties. But it’s also defined on a larger domain than $f$ was. It’s essentially what $f$ would be if its domain weren’t so limited. If $D$ is the domain of $f$, then for any given (connected) domain $D'$, where $D \subset D'$, there’s at most one function with domain $D'$ that’s an analytic continuation of $f$: if a continuation exists, it’s unique.

Computing analytic continuations is not easy. This is heavy enough already, without getting into the details. But the important thing to understand is that if we’ve got a function $f$ with an interesting set of properties, we’ve got a method that might be able to give us a new function $g$ that:

- Everywhere that $f$ was defined, $f = g$.

- Everywhere that $f$ was differentiable, $g$ is also differentiable.

- Everywhere that $f$ could be computed as a sum of an infinite power series, $g$ can also be computed as a sum of an infinite power series.

- $g$ is defined in places where $f$ and the power series for $f$ are not.
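The simplest example I know of those properties is the geometric series, which is a much tamer case than zeta and is my own addition here. Take $f(z) = \sum_{n=0}^{\infty} z^n$, which only converges when $|z| < 1$, and $g(z) = \frac{1}{1 - z}$, which is defined everywhere except $z = 1$. Inside the unit disk they agree; outside it, only $g$ makes sense. A rough sketch, with illustrative names:

```python
def geometric_partial_sum(z, terms):
    """Sum of z^n for n = 0..terms-1: a truncation of the series that defines f."""
    return sum(z ** n for n in range(terms))

def g(z):
    """The continuation 1 / (1 - z), defined everywhere except z = 1."""
    return 1 / (1 - z)

inside = 0.5
# Inside the disk |z| < 1 the series converges, and it converges to g(z).
print(geometric_partial_sum(inside, 60), g(inside))

outside = 2
for terms in (5, 10, 20):
    # Outside the disk the partial sums just blow up: 31, 1023, 1048575.
    print(terms, geometric_partial_sum(outside, terms))

# g is perfectly well defined at z = 2, but that does not make 1 + 2 + 4 + 8 + ... equal -1.
print(g(outside))
```

At $z = 2$, $g$ has the value $-1$. That is a statement about $g$, not about the sum $1 + 2 + 4 + 8 + \cdots$, which still diverges.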

So, getting back to the Riemann Zeta function: we don’t have a proper closed form equation for zeta. What we have is the power series of the function that zeta is the analytic continuation of:

$$\zeta(s) = \sum_{n=1}^{\infty} n^{-s}$$

If $s = -1$, then the series for that function expands to:

$$\sum_{n=1}^{\infty} n^{1} = 1 + 2 + 3 + 4 + 5 + \cdots$$

The power series is undefined at this point; the base function that we’re using, that zeta is the analytic continuation of, is undefined at $s = -1$. The power series is an approximation of the zeta function, which works over some specific range of values. But it’s a flawed approximation. It’s wrong about what happens at $s = -1$. The approximation says that the value at $s = -1$ should be a non-converging infinite sum. It’s wrong about that. The Riemann zeta function is defined at that point, even though the power series is not. If we use a different method for computing the value of the zeta function at $s = -1$ – a method that doesn’t produce an incorrect result! – the zeta function has the value $-\frac{1}{12}$ at $s = -1$.

Note that this is a very different statement from saying that the sum of that power series is $-\frac{1}{12}$ at $s = -1$. We’re talking about fundamentally different functions! The Riemann zeta function at $s = -1$ does not expand to the power series that we used to approximate it.
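For the curious, here is one “different method” sketched in code. This is my own illustration, not something derived above: it assumes Riemann’s functional equation, $\zeta(s) = 2^s \pi^{s-1} \sin\left(\frac{\pi s}{2}\right) \Gamma(1 - s)\, \zeta(1 - s)$, which relates the value at $s$ to the value at $1 - s$. For $s = -1$, that means we only ever evaluate the series at $1 - s = 2$, where it converges. The function names and the number of terms are arbitrary.

```python
import math

def zeta_by_series(s, terms=200_000):
    """Truncated sum of n^(-s); only a sensible approximation when s > 1."""
    return sum(n ** -s for n in range(1, terms + 1))

def zeta_by_functional_equation(s, terms=200_000):
    """Approximate zeta(s) for real s < 0 via the functional equation,
    evaluating the series only at 1 - s > 1, where it converges."""
    return (2 ** s * math.pi ** (s - 1) * math.sin(math.pi * s / 2)
            * math.gamma(1 - s) * zeta_by_series(1 - s, terms))

# Plugging s = -1 straight into the series gives partial sums of 1 + 2 + 3 + ...
for terms in (10, 100, 1000):
    print(terms, sum(range(1, terms + 1)))  # grows without bound

value = zeta_by_functional_equation(-1)
# The continuation, by contrast, has a finite value close to -1/12.
print(value, -1 / 12)
```

The partial sums of the series at $s = -1$ never settle down, while the value obtained through the functional equation sits right next to $-\frac{1}{12}$. Two different computations, two different functions.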

In physics, if you’re working with some kind of system that’s described by a power series, you can come across the power series that produces the sequence that looks like the sum of the natural numbers. If you do, and if you’re working in the complex number plane, and you’re working in a domain where that power series occurs, what you’re actually using isn’t really the power series – you’re playing with the analytic zeta function, and that power series is a flawed approximation. It works most of the time, but if you use it in the wrong place, where that approximation doesn’t work, you’ll see the sum of the natural numbers. In that case, you get rid of that sum, and replace it with the correct value of the actual analytic function, not with the incorrect value of applying the power series where it won’t work.

Ok, so that warning at the top of the post? Entirely justified. I screwed up a fair bit at the end. The series that defines the value of the zeta function for some values, the series for which the Riemann zeta is the analytic continuation? It’s not a power series. It’s a series alright, but not a power series, and not the particular kind of series that defines a holomorphic or analytic function.

The underlying point, though, is still the same. That series (not power series, but series) is a partial definition of the Riemann zeta function. It’s got a limited domain, where the Riemann zeta’s domain doesn’t have the same limits. The series definition still doesn’t work at $s = -1$. The series is still undefined at $s = -1$. At $s = -1$, the series expands to $1 + 2 + 3 + 4 + 5 + 6 + \cdots$, which doesn’t converge, and which doesn’t add up to any finite value, $-\frac{1}{12}$ or otherwise. That series does not have a value at $s = -1$. No matter what you do, that equation – the definition of that series – does not work at $s = -1$. But the Riemann Zeta function is defined in places where that equation isn’t. Riemann Zeta at $s = -1$ is defined, and its value is $-\frac{1}{12}$.

Despite my mistake, the important point is still that last sentence. The value of the Riemann zeta function at $s = -1$ is not the sum of the set of natural numbers. The equation that produces the sequence doesn’t work at $s = -1$. The definition of the Riemann zeta function doesn’t say that it should, or that the sum of the natural numbers is $-\frac{1}{12}$. It just says that the first approximation of the Riemann zeta function for some, but not all values, is given by a particular infinite sum. In the places where that sum works, it gives the value of zeta; in places where that sum doesn’t work, it doesn’t.
